Simple self-hosted AI observability.

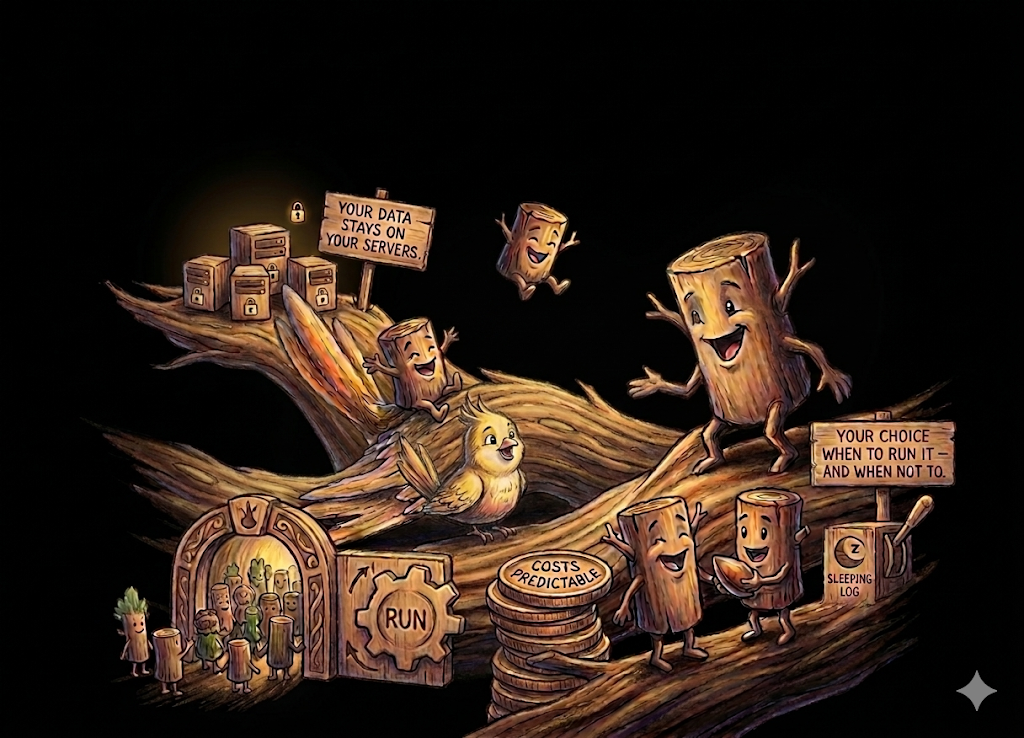

Ask in plain English. Get real answers from your logs, traces, and metrics. One Docker image. Your infrastructure. No seat limits.

We're onboarding a small group of teams for early access. Pricing will be simple, predictable, and nothing like the per-host, per-GB model you're used to.